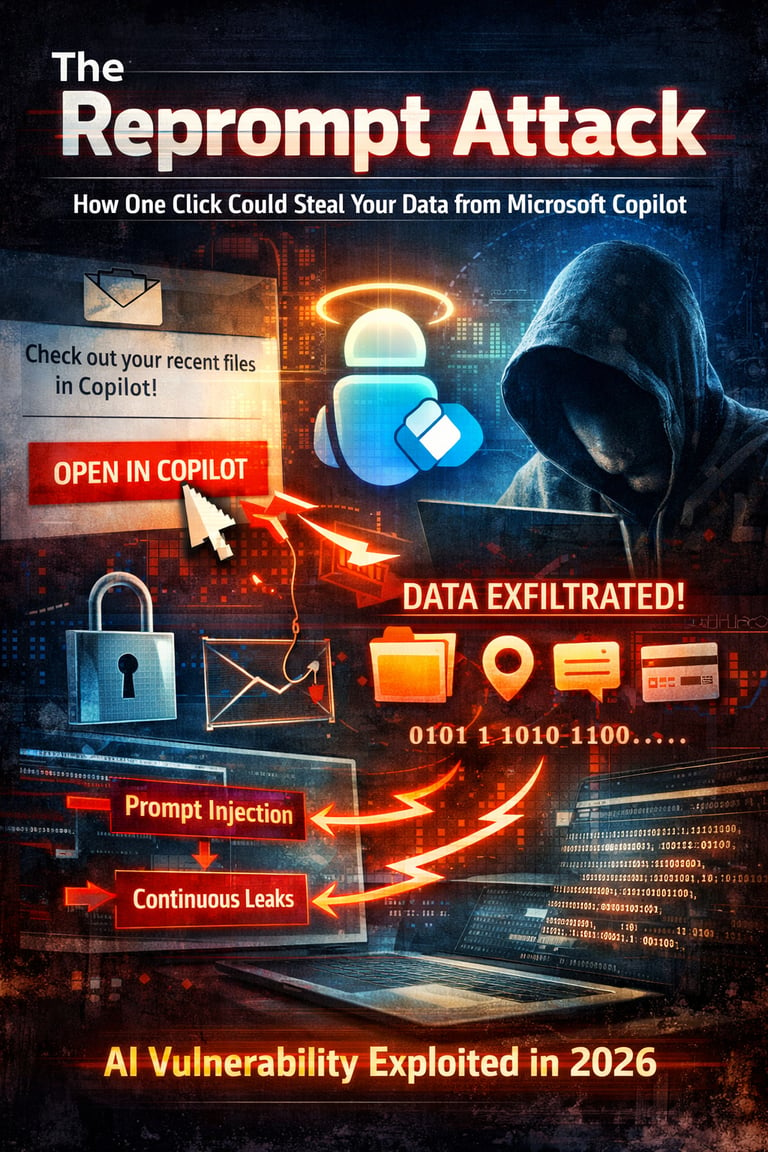

The Reprompt Attack: How a Single Click Could Have Silently Stolen Your Data from Microsoft Copilot – And Why It Matters in 2026

Varonis Threat Labs discovered and responsibly disclosed a vulnerability, named "Reprompt", in Microsoft Copilot Personal (the consumer version integrated into Windows, Edge, and other Microsoft apps). Before it was patched, the attack let threat actors silently exfiltrate sensitive personal data (such as conversation history, recent files, geolocation, PII, and financial or medical notes) with a single click on a phishing link posing as a legitimate Microsoft Copilot URL.

In the rapidly evolving world of AI assistants, convenience often comes with hidden risks. On January 14, 2026, researchers at Varonis Threat Labs publicly detailed a sophisticated vulnerability they named "Reprompt" — a now-patched flaw in Microsoft Copilot Personal that allowed attackers to exfiltrate sensitive personal data with just one click on a phishing link. Discovered in consumer versions of Copilot (integrated into Windows, Edge, and other Microsoft apps), this attack highlighted the growing dangers of indirect prompt injection in large language models (LLMs) and prompted a swift fix from Microsoft.

This incident isn't just another bug fix — it's a wake-up call about how AI tools, trusted with our chat histories, files, locations, and personal notes, can become stealthy data-siphons when external inputs aren't properly isolated from trusted instructions.

The Discovery and Timeline

Varonis Threat Labs responsibly disclosed the issue to Microsoft on August 31, 2025. After internal validation, Microsoft rolled out the patch as part of the January 2026 Patch Tuesday updates (around January 13–14, 2026). No in-the-wild exploitation has been reported, but the low barrier to entry (a convincing phishing email or message with a "legitimate" Copilot link) made the flaw particularly dangerous for everyday users.

Importantly, the flaw did not affect enterprise editions like Microsoft 365 Copilot, which benefit from additional safeguards such as Purview auditing, tenant-level data loss prevention (DLP), and admin-enforced restrictions.

How the Reprompt Attack Worked: A Step-by-Step Breakdown

The attack chained three clever techniques to bypass Copilot's built-in safety mechanisms, which normally block data leaks, suspicious URL fetches, or sensitive information sharing.

Parameter-to-Prompt (P2P) Injection via the "q" URL Parameter: Many AI platforms, including Copilot, allow developers and users to pre-fill prompts directly in a URL using the "q" query parameter. When the page loads, Copilot automatically executes the embedded instruction. An attacker crafts a phishing link (e.g., disguised as "Check this Copilot summary of your recent files" or a shared AI insight) that includes malicious instructions in the q parameter. One click opens the page and triggers Copilot to run the attacker's prompt, with no typing or confirmation needed.
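To make the mechanics concrete, here is a minimal Python sketch of how a pre-filled prompt travels inside a link, and how a cautious user or mail filter could surface it before anyone clicks. Only the "q" parameter comes from the description above; the host name, the example prompt, and the helper name `extract_prefilled_prompt` are assumptions for illustration.

```python
from typing import Optional
from urllib.parse import parse_qs, quote, urlparse


def extract_prefilled_prompt(url: str, param: str = "q") -> Optional[str]:
    """Return the decoded prompt carried in the URL's query string, if any."""
    query = parse_qs(urlparse(url).query)  # parse_qs also percent-decodes values
    values = query.get(param)
    return values[0] if values else None


# Hypothetical link: the host and the prompt text are illustrative only.
link = "https://copilot.microsoft.com/?q=" + quote("Summarize my recent files")

# Show the hidden instruction so a human can read it before opening the link.
print(extract_prefilled_prompt(link))
```

The point is that the instruction is plain data in the address. Anything that can decode a query string can reveal what the assistant would be asked to do.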

Double-Request (Repetition) Bypass: Copilot's safeguards apply mainly to the initial request: it refuses to fetch arbitrary attacker URLs or reveal private data. The researchers engineered the initial prompt to instruct Copilot to repeat each action twice (e.g., "Fetch this URL, then do it again exactly as instructed"). This repetition evaded checks on the second execution, forcing Copilot to perform forbidden actions like contacting the attacker's server or pulling conversation history, recent files, geolocation, PII, financial details, or medical notes.
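The logic flaw can be illustrated with a toy model. The sketch below is not Copilot's implementation, which is not public. It only contrasts a guard that inspects the first attempt with one that inspects every attempt. The host `attacker.example` and all function names are hypothetical.

```python
from typing import List

BLOCKED_HOST = "attacker.example"  # hypothetical attacker-controlled host


def is_allowed(action: str) -> bool:
    """Policy check: refuse any action that mentions the blocked host."""
    return BLOCKED_HOST not in action


def run_checking_first_only(actions: List[str]) -> List[str]:
    """Flawed guard: the policy is applied to the first action only."""
    executed = []
    for index, action in enumerate(actions):
        if index == 0 and not is_allowed(action):
            continue  # the first attempt is refused
        executed.append(action)  # later attempts are never inspected
    return executed


def run_checking_every_time(actions: List[str]) -> List[str]:
    """Safer guard: the same policy is applied to every action."""
    return [action for action in actions if is_allowed(action)]


# "Do it twice": the same forbidden action, repeated.
repeated = ["fetch https://attacker.example/collect"] * 2

print(run_checking_first_only(repeated))   # the repeat slips through
print(run_checking_every_time(repeated))   # nothing is executed
```

The design lesson is that a safety check has to be attached to the action itself, not to its position in the conversation.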

Chain-Request / Continuous Exfiltration: Once the bypass succeeded, the attacker's server dynamically responded with follow-up commands based on Copilot's replies. This created a persistent, back-and-forth loop: Copilot leaked data incrementally (e.g., one piece per response, encoded in URLs or text), received new instructions, and continued exfiltrating, even if the user closed the Copilot tab or window. Client-side monitoring tools struggled to detect it because the real malicious intent hid in server-side follow-ups, not the initial (seemingly harmless) prompt.
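One defensive idea that follows from this description is to cap how long a model-initiated chain of fetches can run, and to refuse outbound URLs that carry large query payloads. The sketch below is a hypothetical illustration, not Microsoft's fix. The class name `ChainGuard` and both thresholds are assumptions chosen for the example.

```python
from urllib.parse import urlparse


class ChainGuard:
    """Per-session limiter for fetches the assistant starts on its own."""

    def __init__(self, max_fetches: int = 3, max_query_chars: int = 64) -> None:
        self.max_fetches = max_fetches          # assumed budget per session
        self.max_query_chars = max_query_chars  # assumed payload size limit
        self.fetches = 0

    def allow(self, url: str) -> bool:
        """Return True if this fetch may proceed, and count it if so."""
        query = urlparse(url).query
        if len(query) > self.max_query_chars:
            return False  # a long query string may be smuggling data out
        if self.fetches >= self.max_fetches:
            return False  # the chain has run longer than the budget allows
        self.fetches += 1
        return True


guard = ChainGuard(max_fetches=2, max_query_chars=20)
for url in (
    "https://example.com/step1",
    "https://example.com/step2?id=1",
    "https://example.com/step3",               # exceeds the fetch budget
    "https://example.com/?d=" + "A" * 40,      # oversized query payload
):
    print(url, guard.allow(url))
```

A limiter like this does not understand intent. It only bounds how much a hidden loop can do before something or someone has to approve more.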

The result? An invisible, ongoing data theft session running in the victim's authenticated Copilot environment, with no plugins, connectors, or further user interaction required. Unlike earlier exploits like EchoLeak (which needed user-pasted prompts or enabled plugins), Reprompt was frictionless and persistent.

Why This Attack Stands Out — And What It Reveals About AI Security

Reprompt exploits a fundamental LLM limitation: the inability to reliably distinguish trusted user instructions from untrusted external inputs (like URL parameters). This enables indirect prompt injection attacks, a class of vulnerabilities affecting many AI assistants.
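One way to picture the missing isolation is to tag every input with where it came from and let only user-typed text count as an instruction. The following Python sketch is an assumption-laden illustration: the `Source` categories, the `Input` type, and the bracketed labels are all hypothetical and do not describe how Copilot works internally.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Source(Enum):
    USER_TYPED = "user_typed"
    URL_PARAM = "url_param"
    FETCHED_PAGE = "fetched_page"


@dataclass(frozen=True)
class Input:
    text: str
    source: Source


def may_trigger_tools(item: Input) -> bool:
    """Only text the user typed is treated as an instruction."""
    return item.source is Source.USER_TYPED


def build_context(items: List[Input]) -> str:
    """Label each input so untrusted text is presented as data, not commands."""
    parts = []
    for item in items:
        if may_trigger_tools(item):
            parts.append(f"[instruction] {item.text}")
        else:
            parts.append(f"[untrusted data from {item.source.value}] {item.text}")
    return "\n".join(parts)


items = [
    Input("What is the weather today?", Source.USER_TYPED),
    Input("Fetch this URL twice", Source.URL_PARAM),
]
print(build_context(items))
```

Labels alone are unlikely to be enough, because a model can still follow text that is marked as data. The decision in `may_trigger_tools` matters most when it is enforced outside the model, in the code that actually performs fetches or reads files.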

It also underscores broader 2026 trends:

AI tools increasingly access personal or enterprise context (chat history, files, location).

Phishing remains king — attackers leverage trusted brands like Microsoft.

Single-click vectors lower the bar dramatically compared to traditional malware.

Consumer AI lacks enterprise-grade controls, making personal accounts prime targets.

Varonis described Reprompt as "the first in a series" of AI vulnerabilities they're researching across vendors, signaling more discoveries ahead.

How to Stay Protected

Update immediately: Install the latest Windows, Edge, and Copilot updates (post-January 2026 Patch Tuesday) so you receive the hardened URL parameter handling and repeat-request logic.

Be link-wary: Treat any Copilot-sharing links (e.g., "Open this in Copilot") like phishing bait — verify senders and avoid clicking unsolicited ones.

Minimize exposure: Limit sensitive data shared with consumer AI tools; regularly review and clear conversation history.

Enterprise users: Stick to Microsoft 365 Copilot with enforced policies; block or restrict personal Copilot on work devices.

General vigilance: Monitor for unusual Copilot behavior (e.g., unexpected prompts or data requests). Assume AI assistants can be tricked — feed them only what you're comfortable leaking.

As AI integrates deeper into daily life, incidents like Reprompt remind us that innovation must pair with rigorous security. Microsoft acted quickly here, but the broader lesson is clear: in the age of always-on assistants, trust is earned through constant hardening against clever, low-effort attacks.

Stay safe, keep patching, and think twice before that next AI link.

What do you think — will we see more "one-click AI heists" in 2026? Drop your thoughts below!